The Engine Is the Contract: A Process Engine for Agents, From First Principles

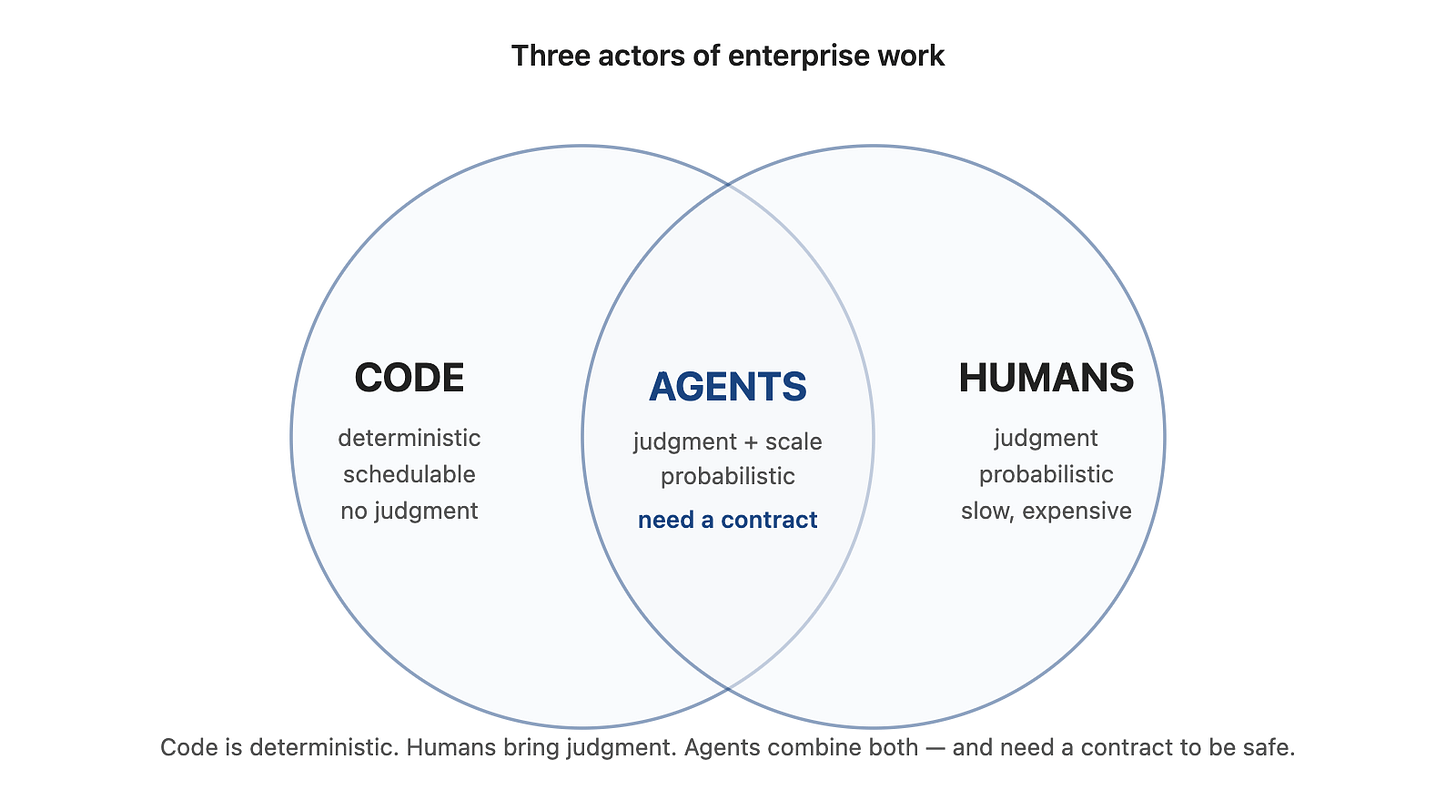

Process engines were built for two actors: code and humans. Agents are the third — and they need a contract the engine, not the model, can hold.

TL;DR. I came at this from two directions: years of content and process work, where engines could route and audit but not judge, and years of autonomous-agent work, where agents could reason but sometimes forgot, drifted, or overreached. Agents are a third actor — probabilistic like humans, schedulable like code, safe only when bounded by a contract. Vertesia Process Engine is our answer: a runtime where agents can author, execute, and supervise work, while the engine — not the model — holds the line. (Shipping in the upcoming Vertesia Platform release.)

I wanted to look at the process engine from first principles in the AI era.

Not “how do we add an AI node to a workflow builder?”

Not “how do we wrap governance around an agent framework?”

The initial thinking was:

If agents are now part of enterprise work, what does a process engine need to be?

I came to that question from both sides.

I have spent years around content and process platforms. At Nuxeo, ECM and BPM were two sides of the same product. The work was usually some version of a document moving through decisions: contract review, invoice approval, vendor onboarding, case management, loan origination, records retention. The canonical enterprise workflows.

The process engines of that era were built around two actors. Code, which executed deterministic steps and could not override the path. Humans, who handled judgment, exceptions, and approvals through gates the engine paused for.

That model held for a long time. Most engines I’d worked on or alongside — BPMN suites, workflow engines, ECM systems, case management platforms, state machines, low-code builders — fit inside it. The engine could route, pause, escalate, retry, and audit. But the judgment step always leaked back to a human. Someone still had to read the document and decide.

Then, at Vertesia, we spent years building autonomous agents in different forms: open-ended workers, tool-using assistants, bounded agent nodes, multi-agent workflows. Those systems could reason through work that used to require a human. But they exposed the other side of the problem: agents sometimes forget instructions, drift from the intended path, improvise where they should not, or fail to preserve properties the business needs enforced.

That was the design tension.

Process engines preserve guarantees but historically could not reason.

Agents can reason but do not, by themselves, preserve process guarantees.

The question became: what engine gives us the best of both worlds?

Control is deterministic. Behavior is probabilistic. The boundary between them is a contract, and the engine owns the contract.

That is the best-of-both-worlds version. Agents get room to reason where reasoning creates value. The process engine enforces the properties the business cannot leave to model behavior.

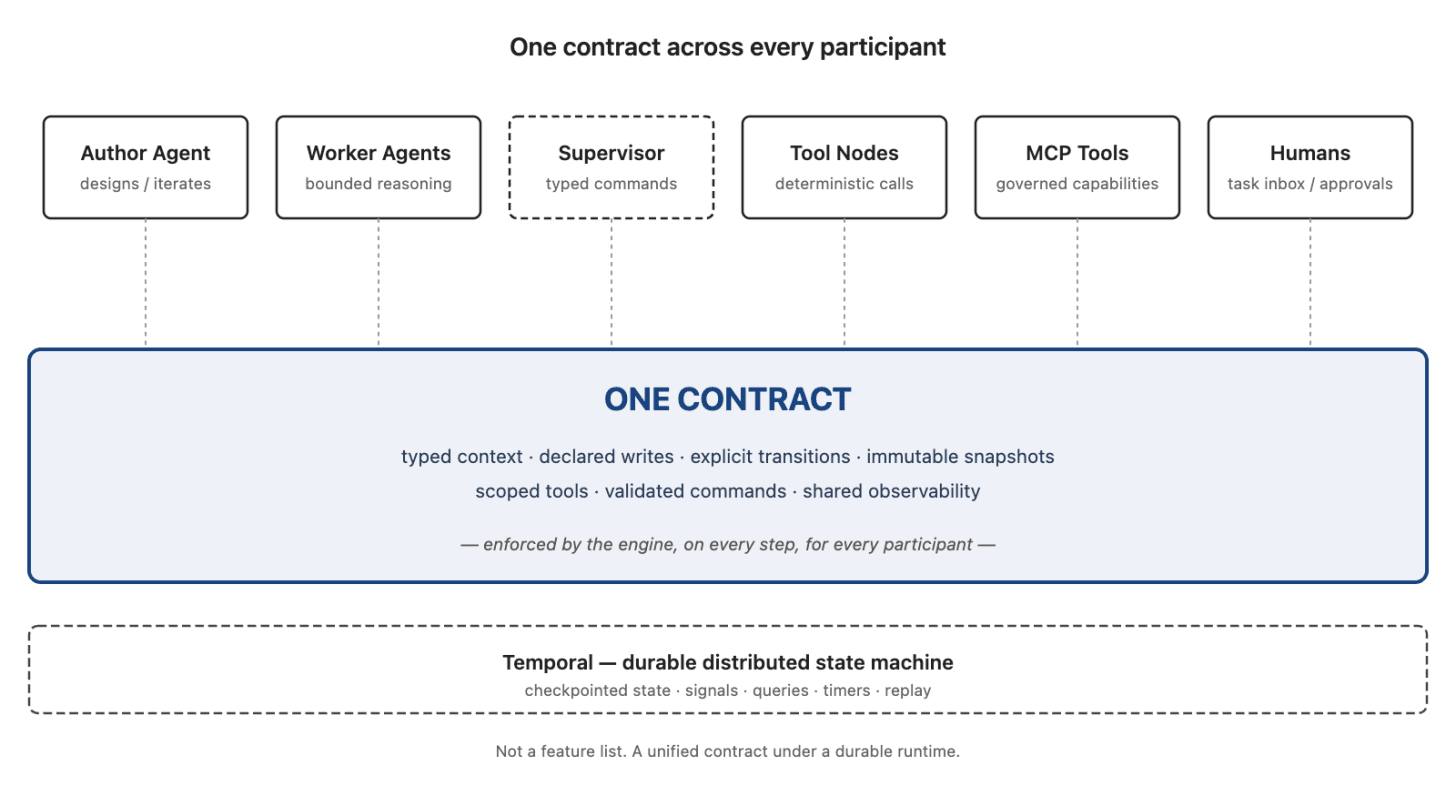

This essay is what came out of taking that question seriously. The result is Vertesia Process Engine: a full process engine where agents can author processes, execute bounded work inside nodes, and supervise runs through typed commands — while the engine remains the trust anchor.

It has three agent-facing seats around a durable process engine. The process is authored by an agent, executed with agents inside its nodes (on a battle-tested durable substrate), and, when the run demands it, supervised by an agent that can steer the flow without breaking it.

The engine itself is the only part that is not an agent. That is the point: agents can author, execute, and supervise, but the engine owns the invariant.

A process engine is not just a visual workflow editor. It is, in effect, the runtime contract for work that spans state, decisions, human intervention, retries, subprocesses, versioning, and auditability. Once agents become part of that work, the contract has to change. The engine has to know what agents are allowed to read, what they are allowed to write, where they are allowed to route, when humans can intervene, and how a run survives over time. This is not an AI node bolted onto a workflow builder. The primitives changed because agents are load-bearing actors, and the change reaches authoring, writes, transitions, supervision, validation, and runtime durability.

What the Traditional Model Couldn’t Close

BPM and ECM converged decades ago for a reason. Most serious business processes are about content — a contract, an invoice, a case file, a policy document, a claim. ECM gave the content a home; BPM gave the decisions a sequence. Together they automated everything except the part where someone had to read the document and judge.

That gap had operational consequences. Process throughput was capped by reviewers. The engine could route a contract to legal in under a second; legal took three weeks. One reviewer on vacation parked an entire approval batch. Many serious BPM projects ended up with a human task queue that grew faster than the automation.

Vendors tried to close the gap in a dozen ways. Rules engines. Content classification. Template-based extraction. RPA bolted onto the end. Each one moved the line a few percentage points and left the core wall standing — because none of them could reliably read a document, judge the case, and remove the human decision step across enough of the workflow.

That’s the wall agents move. Which is why the engine needs to be redesigned, not refitted.

The Two Failure Modes

Before the design, the shape of the current wrong answers.

Most workflow tools still treat the AI model as a service call with a funny return type. You draw the flowchart, drop in an “AI node,” and the AI is a step like any other — it takes inputs, returns outputs, has no standing to influence the flow. This is safe. It is also the reason these systems feel disappointing when the work is genuinely hard. The moment a case needs judgment that doesn’t reduce to an input/output mapping, the AI is in the wrong seat.

Many agent-framework patterns let the AI model drive too much, including parts where determinism matters. You hand the agent a goal, a tool catalog, and hope. This is powerful in demos and terrifying in production. Nothing stops the model from deciding the compliance step is unnecessary today. Nothing forces the same case to route the same way twice. Nothing audits.

Real enterprise work lives between them. Contract review, invoice approval, vendor onboarding, document intake pipelines, fund operations — these are processes with typed state, deterministic routing, human approval gates, and steps where agent reasoning earns its keep. They need both planes at once, on the same run, without the seams showing.

The Contract in Practice

The thesis sentence sounds abstract. Here is what it means concretely.

In a contract-review process, an agent node may be allowed to write risk_score, risk_explanation, and legal_review_required. It may not write approved, payment_released, or final_status. The engine can route on the fields the agent produced, but the agent does not own the business transition. That distinction is the system.

Every design decision follows. The engine owns transitions, guards, routing, context validation, versioning. Agents operate inside nodes, bounded by a schema the engine enforces. The contract between them — the writes declaration on each node — is the single most load-bearing idea in the system.
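To make the contract-review example concrete, here is a minimal sketch of a writes check. It is not the Vertesia API: the function name, the exception type, and the context layout are assumptions made for illustration. Only the field names come from the example above.

```python
# Illustrative only: the names below are placeholders, not the Vertesia API.
AGENT_NODE_WRITES = {"risk_score", "risk_explanation", "legal_review_required"}


def apply_update(context: dict, update: dict, writes: set) -> dict:
    """Merge a node's update into the context, refusing undeclared fields."""
    undeclared = set(update) - writes
    if undeclared:
        raise PermissionError(f"undeclared writes: {sorted(undeclared)}")
    return {**context, **update}


context = {"contract_id": "C-1", "approved": False}

# The agent may report its risk assessment.
accepted = apply_update(
    context,
    {"risk_score": 0.82, "legal_review_required": True},
    AGENT_NODE_WRITES,
)

# The agent may not approve the contract, whatever the model emits.
try:
    apply_update(context, {"approved": True}, AGENT_NODE_WRITES)
    blocked = False
except PermissionError:
    blocked = True
```

The check lives outside the model. Nothing in the prompt has to persuade the agent not to write approved; the update is simply refused.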

The rest of this essay is how a single sentence turns into an engine.

First Leg: AI-Powered Authoring Assistant

There is a dilemma at the center of every visual process tool.

BPMN editors give real expressive power — typed state, structured parallelism, event-based gateways, compensation handlers. BPMN is powerful, but operationalizing it usually requires specialized expertise. The palette has forty-plus element types, the diagram grows faster than the process itself, and training is not optional.

Low-code builders go the other way. The UX is friendly, the demo lands in ten minutes, and the expressive ceiling arrives on day three. You hit a case that needs a typed branch on an agent’s output, and the builder either doesn’t support it or supports a degraded version that hides in a settings panel. You end up with a process that looks simple and behaves mysteriously.

There is no middle. Most visual editors end up as a compromise between power and accessibility, and the compromise only moves in one direction at a time.

An AI model authoring against a well-designed native format dissolves the tradeoff.

The Vertesia process definition is designed for execution, understandability, and strict validation. It has no layout, no coordinates, no visual grammar — those are concerns for the view, not the contract. That the format is also a natural target for AI models is a consequence, not a goal. The same discipline that makes a definition precise for a runtime — typed, validated, free of implicit state — is what makes it legible for a model reading and writing against it. The mechanics, in the end, are incidental. The discipline is what matters.

Authoring happens through an AI assistant. The human describes intent — “add a legal review step for contracts over fifty thousand dollars, route rejects back to renegotiation, notify the submitter on approval” — and the assistant writes the definition. Edits are the same surface. “Make the approval go to the finance group instead of legal” mutates the definition; the editor shows the diff; the engine runs it.

The wiring problem

The hardest part of process authoring is often not drawing the nodes. It is wiring them.

A process designer has to know what each node expects, what the previous node produced, which field maps to which parameter, what should be written back into context, which output is safe to route on, what can run as a deterministic tool call, and what genuinely needs an agent. That is where process design usually becomes implementation work — and where most low-code builders quietly fall apart.

MCP changes that surface.

An MCP server does not just expose a black-box API. It exposes tools with descriptions, input schemas, and capability metadata. The tool surface is legible to an AI assistant.

So when the assistant wires a process, it does not need the human designer to know every API parameter up front. It can inspect the available MCP tools, read the schemas, infer the mapping from process context to tool input, propose the node configuration, and validate the result against the process definition. Same loop for output — what the tool returns becomes a candidate for the node’s writes, validated against the process schema before the engine accepts it.
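The wiring loop described above can be sketched roughly as follows. The descriptor follows the shape MCP uses for tool listings (name, description, inputSchema), but the lookup_vendor tool, the context schema, and the propose_wiring function are all hypothetical. The matching here is plain name-and-type comparison, standing in for the inference an assistant would actually do.

```python
# Hypothetical tool descriptor in the shape MCP uses for tool listings
# (name, description, inputSchema). The tool itself is invented.
TOOL = {
    "name": "lookup_vendor",
    "description": "Fetch a vendor record by id.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "vendor_id": {"type": "string"},
            "region": {"type": "string"},
            "include_history": {"type": "boolean"},
        },
        "required": ["vendor_id", "region"],
    },
}

# Hypothetical process context schema.
CONTEXT_SCHEMA = {
    "properties": {
        "vendor_id": {"type": "string"},
        "total_value": {"type": "number"},
    }
}


def propose_wiring(tool: dict, context_schema: dict) -> dict:
    """Map context fields to tool inputs by name and type; list what is left."""
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    context_fields = context_schema["properties"]
    inputs, unresolved = {}, []
    for param, spec in schema.get("properties", {}).items():
        field = context_fields.get(param)
        if field is not None and field.get("type") == spec.get("type"):
            inputs[param] = f"context.{param}"
        elif param in required:
            unresolved.append(param)  # needs a human or a model to decide
    return {"tool": tool["name"], "inputs": inputs, "unresolved": unresolved}


wiring = propose_wiring(TOOL, CONTEXT_SCHEMA)
```

Here vendor_id is wired automatically, the optional include_history is left out, and the required region parameter is reported as unresolved so the proposal can be reviewed rather than guessed.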

That is a meaningful shift. The assistant is not merely helping draw a diagram. It is helping translate intent into executable wiring.

If the step is known, the assistant can wire a deterministic tool node — no model in the path at runtime. If the step requires judgment, it can expose the same tool to an agent node. In both cases, the capability enters through the same governed process contract.

Many ways in

The intent surface is not limited to prose.

The assistant can build a process from a screenshot of an n8n workflow a customer already has — the diagram is the spec, and the assistant translates each node into the native format, picking the right node type (interaction, agent, human task, subprocess) for each step.

It can build from a whiteboard photo — someone sketches the approval flow in a meeting, takes a picture, drops it in. The assistant produces a first-draft definition in minutes.

It can import BPMN — the customer brings their existing process library, and the compiler lowers each diagram into the native format, preserving the original BPMN lineage in the definition’s provenance metadata so the diagram still renders and the audit trail is intact.

None of that is a trick. A format optimized for visual editing (BPMN 2.0 XML, shape coordinates, swim lanes) drags its rendering concerns into the runtime contract. A format optimized for a runtime contract renders just fine when you want to look at it. AI models author well against the second shape because it is the shape a rigorous runtime already demands of itself — explicit, typed, testable.

The editor still exists. It is the viewer, not the primary authoring surface. It shows the graph, lets a user click a node to inspect its config, and lets the assistant propose edits the human reviews. The power ceiling is the engine’s ceiling. The accessibility ceiling is whatever the assistant can understand — and that ceiling has moved high enough to change the authoring surface.

Second Leg: Battle-Tested Durable Executor

A run is a Temporal workflow walking the definition node by node.

At each node, four things happen in order:

The engine enters the node and publishes an event.

The node body runs. For a tool node, that’s a function call. For an interaction node, a single-shot AI call with a schema. For an agent node, a child conversation workflow that can use declared tools. For a human task node, a task is created and the workflow parks. For a subprocess node, a child process workflow starts.

The node returns a context update and, optionally, a transition signal.

The engine validates the update against the writes contract, applies the diff, evaluates the guards on the declared transitions, and picks the next node.
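The four steps can be sketched as a small in-memory loop. This is an illustration under stated assumptions, not the engine's implementation: Node, step, and run are hypothetical names, guards are plain Python callables rather than JSON Logic, and nothing here is durable. The event names node_entered and node_completed are the ones the essay uses for supervisor events.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Node:
    name: str
    body: Callable[[dict], dict]   # returns a context update
    writes: set                    # fields this node may change
    # Ordered (guard, target) pairs; the first guard that passes wins.
    transitions: list = field(default_factory=list)


def step(node: Node, context: dict, events: list) -> tuple:
    events.append(f"node_entered:{node.name}")   # step 1: enter and publish
    update = node.body(context)                  # steps 2 and 3: run, get the update
    # Step 4: validate against writes, apply, evaluate guards, pick the next node.
    undeclared = set(update) - node.writes
    if undeclared:
        raise PermissionError(f"{node.name}: undeclared writes {sorted(undeclared)}")
    context = {**context, **update}
    events.append(f"node_completed:{node.name}")
    for guard, target in node.transitions:
        if guard(context):
            return context, target
    return context, None   # this sketch treats "no exit" as the end of the run


def run(nodes: dict, start: str, context: dict) -> tuple:
    events: list = []
    current: Optional[str] = start
    while current is not None:
        context, current = step(nodes[current], context, events)
    return context, events


nodes = {
    "score": Node(
        "score",
        lambda ctx: {"risk_score": 0.9},
        {"risk_score"},
        [(lambda c: c["risk_score"] > 0.5, "legal_review"), (lambda c: True, "done")],
    ),
    "legal_review": Node(
        "legal_review", lambda ctx: {"legal_decision": "pending"}, {"legal_decision"}
    ),
    "done": Node("done", lambda ctx: {}, set()),
}
final_context, events = run(nodes, "score", {"contract_id": "C-1"})
```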

Step four is the one that matters. The engine never applies a context update the node did not declare the right to write. It never routes on a path the definition did not declare. It never executes a node that is not in the snapshot.

Process definitions are versioned contracts. A run executes the snapshot it started with. New edits create new definitions for future runs, not silent mutations of work already in flight. Publishing a revision is safe because there is no lurking question of which in-flight runs will see the change. New starts see new; old continues old.
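A minimal sketch of the snapshot rule, assuming an append-only store. DefinitionStore and Run are hypothetical names, and the definition is reduced to a list of node names for brevity.

```python
import copy


class DefinitionStore:
    """Append-only store: publishing never mutates an earlier version."""

    def __init__(self):
        self._versions = {}   # process name -> list of snapshots

    def publish(self, name: str, definition: dict) -> int:
        self._versions.setdefault(name, []).append(copy.deepcopy(definition))
        return len(self._versions[name])   # versions are 1-based

    def latest(self, name: str) -> int:
        return len(self._versions[name])

    def snapshot(self, name: str, version: int) -> dict:
        return copy.deepcopy(self._versions[name][version - 1])


class Run:
    """A run pins the version that was current when it started."""

    def __init__(self, store: DefinitionStore, name: str):
        self.version = store.latest(name)
        self.definition = store.snapshot(name, self.version)


store = DefinitionStore()
store.publish("contract_review", {"nodes": ["score", "legal_review"]})
in_flight = Run(store, "contract_review")

# A later revision affects new starts only.
store.publish("contract_review", {"nodes": ["score", "finance_review"]})
new_start = Run(store, "contract_review")
```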

The writes contract

Every node declares which context fields it may change. For an agent node, the engine does something specific with that declaration: it builds a result_schema by intersecting the node’s writes list with the process’s context schema, and hands that schema to the child conversation as the agent’s required output shape. The accepted output cannot contain fields outside the declared set — if the model tries to produce them, the engine rejects the update before it becomes process state. When the child returns, the engine validates again before applying anything.
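One way to picture the intersection is the sketch below. The schema layout, build_result_schema, and violations are assumptions for illustration. The use of additionalProperties to forbid extra fields is my choice for expressing the bound in JSON Schema terms, not something the engine is confirmed to do.

```python
# Hypothetical process context schema in JSON Schema style.
CONTEXT_SCHEMA = {
    "type": "object",
    "properties": {
        "total_value": {"type": "number"},
        "risk_score": {"type": "number"},
        "risk_explanation": {"type": "string"},
        "legal_review_required": {"type": "boolean"},
        "approved": {"type": "boolean"},
    },
}


def build_result_schema(context_schema: dict, writes: list) -> dict:
    """Keep only the context fields that the node declared in writes."""
    props = context_schema["properties"]
    return {
        "type": "object",
        "properties": {name: props[name] for name in writes if name in props},
        "additionalProperties": False,
    }


def _matches(value, json_type: str) -> bool:
    if json_type == "boolean":
        return isinstance(value, bool)
    if json_type == "number":
        # bool is a subclass of int in Python, so exclude it explicitly.
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    if json_type == "string":
        return isinstance(value, str)
    return False


def violations(output: dict, schema: dict) -> list:
    """List every reason an agent output fails the result schema."""
    problems = []
    for name, value in output.items():
        spec = schema["properties"].get(name)
        if spec is None:
            problems.append(f"{name}: not in result schema")
        elif not _matches(value, spec["type"]):
            problems.append(f"{name}: expected {spec['type']}")
    return problems


result_schema = build_result_schema(
    CONTEXT_SCHEMA, ["risk_score", "risk_explanation", "legal_review_required"]
)
good = violations({"risk_score": 0.8, "legal_review_required": True}, result_schema)
bad = violations({"risk_score": "high", "approved": True}, result_schema)
```

The same violations check can run twice: once on the shape handed to the agent, and once more when the child returns, matching the validate-again step described above.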

The consequences are what make the rest of the design possible:

Agent impact is bounded. A contract-review agent that handles 20,000 runs a week cannot accidentally write total_value on a node that only declares legal_decision. The engine refuses the update. The run either repairs or fails; it does not silently drift.

Routing is trustworthy. A guard that checks total_value > 50000 can rely on total_value holding whatever the node that declared it wrote there. Nodes that did not declare it cannot have clobbered it.

Debugging is crisp. The per-node context diff in the run inspector shows exactly what each node changed and nothing else. A regression is located by reading the diff, not by bisecting a prompt.

Keep writes tight. Three named fields is better than a blob.

Transitions

Transitions have explicit triggers:

auto: the engine picks the first transition whose JSON Logic guard matches the current context.

agent: the agent node returned a _next_node field, picked from an enum the engine built from the declared agent-triggered transitions. The model can only pick from the declared set, and only with a value the schema accepts.

user: a human signal drives the move, via the Task Inbox or a supervisor command.

The engine never parses prose to decide where to go next. If you catch yourself writing a prompt that says “then decide whether to do X or Y,” lift the decision into a guard and make the node return the signal the guard needs.

Agents may choose from declared exits. They do not invent exits. That is the whole routing safety model in one sentence.
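The routing rules can be sketched as below. The guard syntax follows JSON Logic, but evaluate covers only a tiny subset (var with a string path, >, <, ==, and) and uses Python equality rather than JSON Logic's loose comparison. The transition record layout and the function names are assumptions for illustration.

```python
def evaluate(rule, data):
    """Evaluate a small JSON Logic subset: var, >, <, ==, and."""
    if not isinstance(rule, dict):
        return rule                     # literals evaluate to themselves
    (op, args), = rule.items()          # a rule has exactly one operator
    if op == "var":
        value = data
        for key in str(args).split("."):
            value = value.get(key) if isinstance(value, dict) else None
        return value
    values = [evaluate(arg, data) for arg in args]
    if op == ">":
        return values[0] > values[1]
    if op == "<":
        return values[0] < values[1]
    if op == "==":
        return values[0] == values[1]
    if op == "and":
        return all(values)
    raise ValueError(f"unsupported operator: {op}")


# Hypothetical transition records for one node.
TRANSITIONS = [
    {"trigger": "auto", "to": "legal_review",
     "guard": {">": [{"var": "total_value"}, 50000]}},
    {"trigger": "auto", "to": "auto_approve"},   # no guard: always matches
    {"trigger": "agent", "to": "renegotiate"},
    {"trigger": "agent", "to": "escalate"},
]


def pick_auto(transitions: list, context: dict):
    """Return the first auto transition whose guard matches, else None."""
    for t in transitions:
        if t["trigger"] == "auto" and evaluate(t.get("guard", True), context):
            return t["to"]
    return None


def agent_exits(transitions: list) -> list:
    """The enum handed to the model: declared agent-triggered targets only."""
    return [t["to"] for t in transitions if t["trigger"] == "agent"]


def pick_agent(transitions: list, agent_output: dict) -> str:
    choice = agent_output.get("_next_node")
    if choice not in agent_exits(transitions):
        raise ValueError(f"{choice!r} is not a declared exit")
    return choice


large = pick_auto(TRANSITIONS, {"total_value": 72000})
small = pick_auto(TRANSITIONS, {"total_value": 1000})
chosen = pick_agent(TRANSITIONS, {"_next_node": "renegotiate"})
try:
    pick_agent(TRANSITIONS, {"_next_node": "skip_compliance"})
    invented_exit_accepted = True
except ValueError:
    invented_exit_accepted = False
```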

Durability and coordination

The engine runs on Temporal. That is not an implementation detail — it is the piece of infrastructure that makes every other claim in this essay credible.

A process run is a Temporal workflow: a real distributed state machine with checkpointed state, signals, queries, timers, and replay as first-class primitives. A worker can die; workflow state survives. Another worker resumes from the last durable boundary, with retries and replay handled by the orchestration substrate. A cluster redeploys without losing workflow state. A human task waits three days; the workflow parks, and when the signal arrives it resumes as if no time had passed.

The engine does not distinguish between “waiting three seconds for an API” and “waiting three days for a reviewer.” Both are the same primitive. The same operational model. The same audit trail.

This is the part of the stack that is easy to underestimate. Shallow checkpointing means a mid-run crash loses work. Bolted-on retries mean different node types behave differently. Custom state machines glued together with queues fall over at scale. Temporal is a proper coordination substrate — battle-tested at companies that run it for payments, logistics, and infrastructure orchestration — and the process engine sits directly on top of it. Durability isn’t a feature; it’s the floor.

The category split is worth being explicit about. Temporal is the durable execution substrate — workflow state, signals, timers, retries, replay. Vertesia Process Engine is the product-level contract above it: typed context, declared writes, explicit transitions, human tasks, subprocesses, scoped tools, MCP capabilities, supervisor commands, and shared observability.

For architects, that means redeploys without losing workflow state, uniform retry semantics across every node type, and the certainty that comes from a real distributed state machine rather than a home-grown one. For the business, it means processes that span the time real work actually takes — days, weeks, quarters — with no special casing, and an audit trail that doesn’t require a second system to produce.

Tools Are Process Primitives Too

Agent systems often treat tools as private implementation details of the agent runtime. A process engine for agents cannot.

In Vertesia, tools are first-class process primitives. The same tool system an agent uses inside a bounded reasoning node can also be wired directly into a deterministic process node — same registration, same governance surface, same observability. There is one capability layer, not two.

If a step is known — call an API, transform a file, fetch a record, update an artifact — it runs as a tool node. Deterministic, auditable, no model in the path. If a step requires judgment, an agent node can use the same tools inside its reasoning loop, bounded by writes and the result schema. You should not need an agent for every tool call. You should not need a separate workflow-integration layer for tools the agents already know how to use.

MCP is rapidly becoming the way applications expose themselves to agents: a self-describing interface for tools, resources, and application capabilities. That is useful, but exposing a capability to an agent is only half the problem. Enterprise work also needs to decide where that capability belongs in a process, who or what can call it, what state it can change, how it is audited, and whether it should run deterministically or through an agent’s reasoning loop.

That self-description matters for authoring as much as execution. If the process assistant can inspect the tool schema, it can help wire the process: which context fields become parameters, which outputs should be written back, which node should own the side effect, and whether the capability should run directly or be exposed to an agent. In other words, MCP does not just expand the tool catalog — it gives the assistant a machine-readable integration surface for building processes.

This is where MCP fits into enterprise workflows the right way. MCP tools become governed process capabilities, not just chat-agent extensions. They register through the same substrate, get scoped to the processes and nodes that should see them, and are visible in the run history when they fire. Exposing a tool is not the same as governing it; the engine is what makes the second part possible.

MCP makes apps available to agents. Vertesia makes those capabilities governable inside processes.

Direct call vs. agent-mediated call

A small rule that captures the platform difference:

The process engine should not force every capability through an agent.

If the process knows what to call, call the tool directly.

If the process needs judgment about whether, when, or how to call it, give the tool to an agent node.

If an operator or supervisor needs to repair a run, expose the appropriate capability through the supervised command path.

Same tool substrate. Different control mode. The choice is a process-design decision — made by a human or proposed by the assistant — not an architectural one you have to commit to up front.

There is a performance argument here too. If the system already knows what has to happen, asking an agent to rediscover the next step is wasted latency and wasted cost. Deterministic tool nodes are faster, cheaper, and easier to audit. Agent reasoning should be spent where the path is genuinely uncertain or judgment is required.

Tool governance and side effects

Making tools available inside a process does not mean every node gets every tool. Tool access is scoped by process, node, role, and policy. A deterministic tool node can be given exactly the tool it needs. An agent node can be given a bounded toolset. MCP tools enter through the same governance path rather than becoming an unbounded escape hatch.

Durable execution does not remove the need to reason about side effects. Tool nodes that mutate external systems need idempotency, retry policy, and recorded outcomes. The engine can retry safely only when the side-effect boundary is explicit: what the tool intends to do, what it actually did, and what process context it is allowed to update.
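A common way to make that boundary explicit is an idempotency key with a recorded outcome. The sketch below assumes a key derived from run, node, and payload. SideEffectLedger and release_payment are hypothetical, and the ledger is an in-memory dict where a real system would need durable storage.

```python
import hashlib
import json


class SideEffectLedger:
    """Records each side effect once, keyed by run, node, and payload."""

    def __init__(self):
        self._outcomes = {}   # in-memory for the sketch; must be durable in practice

    @staticmethod
    def key(run_id: str, node_id: str, payload: dict) -> str:
        raw = json.dumps([run_id, node_id, payload], sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def execute(self, run_id: str, node_id: str, payload: dict, call):
        key = self.key(run_id, node_id, payload)
        if key in self._outcomes:
            return self._outcomes[key]   # retry: replay the recorded outcome
        outcome = call(payload)          # first attempt: perform the side effect
        self._outcomes[key] = outcome
        return outcome


sent = []


def release_payment(payload: dict) -> dict:
    sent.append(payload["invoice"])
    return {"status": "released", "invoice": payload["invoice"]}


ledger = SideEffectLedger()
first = ledger.execute("run-1", "pay", {"invoice": "INV-7"}, release_payment)
retry = ledger.execute("run-1", "pay", {"invoice": "INV-7"}, release_payment)
```

A crash between the call and the record would still allow a second call, which is why the same key is usually also passed to the external system when it supports idempotent requests.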

Observability is part of the contract

Once tools, agents, humans, and an optional supervisor all participate in the same run, observability stops being a log stream attached to the side. It becomes part of the process contract: what node ran, what it read, what it wrote, which guard fired, which tool was called, which human approved, which supervisor command changed the path, and why. One run history. One audit trail. One inspector.

The point isn’t more tools. It is one capability surface shared by the process engine, the agent runtime, and the supervisor — under the same contract.

Third Leg: AI Supervisor

This is the novel seat, and the one that took the longest to get right.

Supervision is optional. When the flow is fully clear — every transition guarded, every decision deterministic, every contingency covered — you run in programmatic mode. The engine walks the definition, applies writes, picks transitions. No outer model involved. Predictable, cheap, auditable. That is the right mode for most production processes most of the time.

Run type is not a one-time choice. It shifts by phase and by case. A process under active design is often run supervised — the author watches the run, the supervisor handles the rough edges, edits go in, the loop tightens. Once the flow stabilizes, the same process deploys programmatic in production. A critical run in a new deployment might be supervised for a quarter, then pinned to programmatic once the operator trusts the flow. One process can launch as supervised for edge cases and programmatic for the common path.

So the useful question isn’t “supervised or programmatic?” but “is the flow clear enough for this case, at this stage, to run itself?” If yes, no supervisor. If not, supervise. The engine doesn’t mind either way.

When you do want the supervisor, it is for the cases where the flow isn’t quite enough. An agent node returned a structured result but the transition it implies feels wrong in the context. A human task timed out and someone needs to decide whether to escalate or skip. A guard didn’t match any declared transition because the data came back in a shape the definition didn’t anticipate. The process isn’t broken, but it needs judgment from outside.

That is what supervised mode is for.

A supervised run starts a long-lived top-level child conversation, the ProcessSupervisor, that receives structured events as the run advances: started, node_entered, node_completed, guard_failed, no_transition_matched, node_failed, human_task_waiting, user_message. Each event carries the current node, recent history, declared transitions, current context, and any failure metadata. The supervisor can respond with commands.

The command set is intentionally small:

continue: let the deterministic process keep going.

set_context: propose a context repair. The engine validates the merged context against the process schema and refuses writes to reserved internal fields.

transition_to: move the run through a declared transition, or through an explicit policy-allowed supervisor override that is validated and recorded.

skip_node: skip the current node only when the definition or supervisor policy allows it, with the override recorded in the audit trail.

retry_node: re-enter the current or a requested node.

fail: fail the run with a supervisor-provided reason.

The supervisor can steer a run, but it cannot rewrite the process definition. It cannot add nodes. It cannot invent transitions. It cannot mutate reserved fields. It cannot escape the process snapshot. Every command is validated by the engine before it becomes state.
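A sketch of how command validation might look. The six command names come from the list above; the run-state layout, the field names inside each command, and validate_command itself are assumptions for illustration.

```python
def validate_command(command: dict, run: dict) -> list:
    """Return a list of validation errors; an empty list means accepted."""
    kind = command.get("type")
    if kind in ("continue", "fail"):
        return []
    if kind == "set_context":
        errors = []
        for name in command["values"]:
            if name in run["reserved_fields"]:
                errors.append(f"{name}: reserved internal field")
            elif name not in run["context_fields"]:
                errors.append(f"{name}: not in process schema")
        return errors
    if kind == "transition_to":
        allowed = run["declared_transitions"] | run["policy_overrides"]
        target = command["target"]
        return [] if target in allowed else [f"{target}: not a declared exit"]
    if kind == "skip_node":
        node = run["current_node"]
        return [] if node in run["skippable_nodes"] else [f"{node}: skip not allowed"]
    if kind == "retry_node":
        node = command.get("node", run["current_node"])
        return [] if node in run["snapshot_nodes"] else [f"{node}: not in snapshot"]
    return [f"{kind}: unknown command"]


# Hypothetical view of a run, as the engine might hold it.
RUN = {
    "current_node": "legal_review",
    "snapshot_nodes": {"score", "legal_review", "renegotiate", "done"},
    "declared_transitions": {"renegotiate", "done"},
    "policy_overrides": set(),
    "skippable_nodes": set(),
    "context_fields": {"total_value", "risk_score", "legal_decision"},
    "reserved_fields": {"_run_id"},
}

repair = validate_command(
    {"type": "set_context", "values": {"legal_decision": "approve"}}, RUN
)
overreach = validate_command(
    {"type": "set_context", "values": {"_run_id": "x", "bonus": 1}}, RUN
)
invented = validate_command({"type": "transition_to", "target": "payout"}, RUN)
add_node = validate_command({"type": "add_node", "name": "shortcut"}, RUN)
```

The rejected commands return errors instead of raising, which fits the behavior described in the essay: the supervisor sees the validation error and can try again.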

Three properties make this workable:

The supervisor sees what a human would see. Current node, recent history, context, declared transitions, failure reason. Same observability, same commands. This is not an AI whispering into the engine’s ear — it is an AI occupying the supervisor seat.

The worker agents inside nodes don’t know the run is supervised. They always operate in programmatic posture, constrained by result_schema and writes. That invariant is intentional. Agents at the edge stay simple and auditable regardless of what is driving the outer loop.

The safety boundary is the engine, not the model. If the supervisor asks to set a field outside the schema, the engine refuses and the supervisor sees the validation error on the next event. The engine does not trust the supervisor; it trusts the contract.

The combination is what makes supervised mode different from “letting the AI drive.” The model can drive — but only on the roads the definition has drawn.

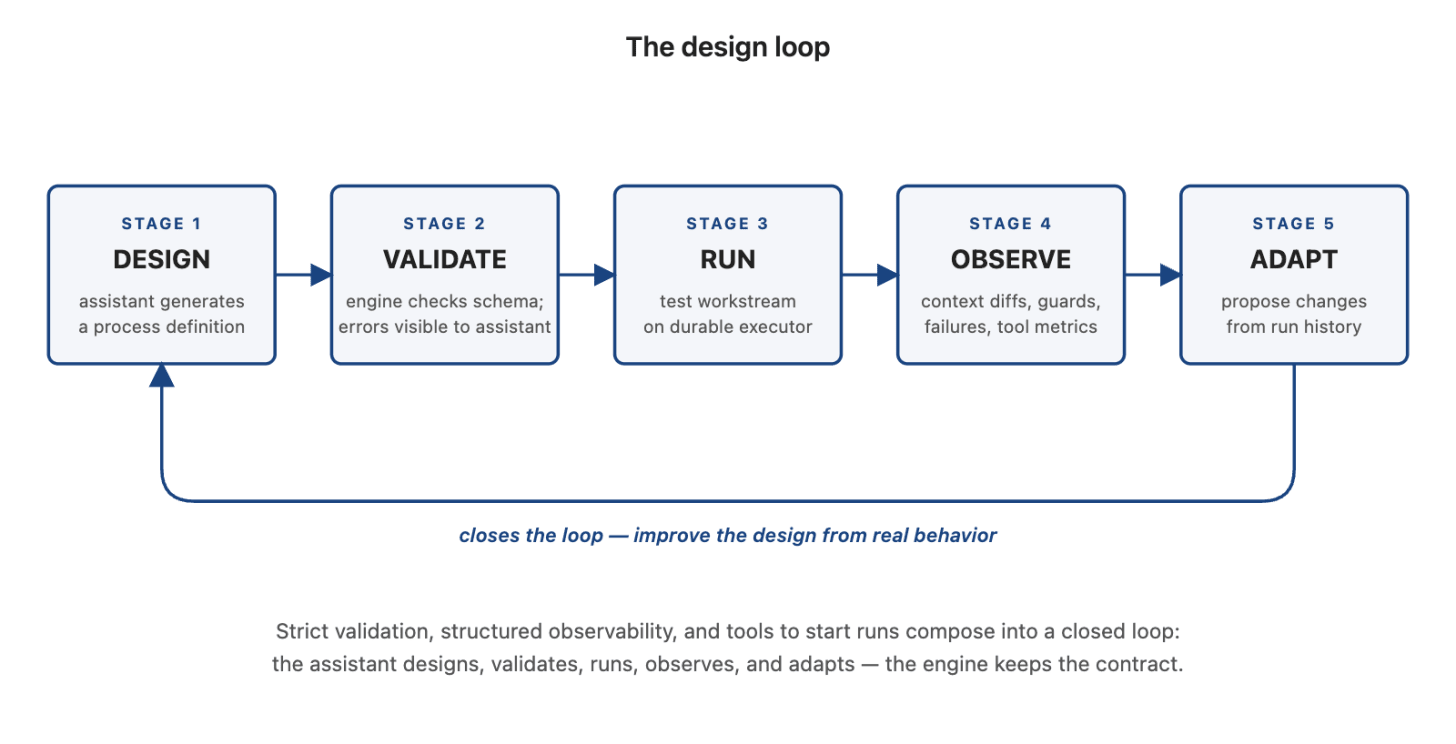

The Design Loop

The assistant doesn’t stop at generating a definition. It closes a loop.

Every definition is strictly validated: writes, transitions, guards, schema references. When the assistant produces a definition that doesn’t compile, it sees the validation errors directly and corrects them. The validator the engine uses at runtime is the validator that tells the assistant why its draft is wrong. No silent acceptance, no “looks plausible,” no hallucinated fields quietly surviving.
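The kind of feedback the assistant reads might look like the sketch below. The definition layout and validate_definition are hypothetical; the checks cover only unknown write fields, unknown transition targets, and unknown triggers.

```python
TRIGGERS = {"auto", "agent", "user"}


def validate_definition(definition: dict) -> list:
    """Return human-readable errors; an empty list means the draft is valid."""
    errors = []
    fields = set(definition["context_schema"]["properties"])
    nodes = definition["nodes"]
    for name, node in nodes.items():
        for written in node.get("writes", []):
            if written not in fields:
                errors.append(f"{name}: writes unknown field '{written}'")
        for transition in node.get("transitions", []):
            if transition["to"] not in nodes:
                errors.append(f"{name}: transition to unknown node '{transition['to']}'")
            if transition["trigger"] not in TRIGGERS:
                errors.append(f"{name}: unknown trigger '{transition['trigger']}'")
    return errors


# A first draft with a hallucinated field and a dangling transition.
draft = {
    "context_schema": {"properties": {"risk_score": {"type": "number"}}},
    "nodes": {
        "score": {
            "writes": ["risk_score", "risk_level"],
            "transitions": [{"trigger": "auto", "to": "legal"}],
        },
        "legal_review": {"writes": [], "transitions": []},
    },
}
first_pass = validate_definition(draft)

# The correction an assistant could make after reading those errors.
draft["nodes"]["score"]["writes"] = ["risk_score"]
draft["nodes"]["score"]["transitions"][0]["to"] = "legal_review"
second_pass = validate_definition(draft)
```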

That is the first half of the loop: design, validate, correct, repeat.

The second half is what separates this from every other “AI helps you draw a workflow” demo. The assistant can run the process it designed. It starts a test workstream, watches the run unfold through the same observability surface a human operator sees, and reads the outcome — which nodes succeeded, which failed, where the context diverged from intent, which guards fired the wrong way. Then it revises the definition against what actually happened.

The loop extends into history. The assistant can look at past runs of a deployed process — how often a guard fired, which human-task branches dominated, where agent nodes produced marginal results — and propose optimizations. Consolidate two nodes that always co-occurred. Tighten writes on a node that never used three of its declared fields. Raise the threshold on a guard that hasn’t triggered in a year. Add a missing transition that keeps forcing supervisor intervention.
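One of those optimizations, tightening writes, reduces to a set difference over recorded diffs. The sketch assumes run history is available as per-node context diffs; unused_writes and the sample data are invented for illustration.

```python
def unused_writes(declared: dict, run_diffs: list) -> dict:
    """Find declared fields that no recorded run ever wrote, per node."""
    used = {node: set() for node in declared}
    for run in run_diffs:
        for node, diff in run.items():
            used.setdefault(node, set()).update(diff)   # diff keys are written fields
    return {
        node: sorted(fields - used[node])
        for node, fields in declared.items()
        if fields - used[node]
    }


# Hypothetical declarations and history.
declared = {
    "score": {"risk_score", "risk_explanation", "debug_notes"},
    "legal_review": {"legal_decision"},
}
history = [
    {
        "score": {"risk_score": 0.8, "risk_explanation": "late fees"},
        "legal_review": {"legal_decision": "approve"},
    },
    {"score": {"risk_score": 0.2, "risk_explanation": "standard terms"}},
]
candidates = unused_writes(declared, history)
```

The output is a proposal, not a change: a field that went unwritten in the sampled runs may still matter for a rare case, so a human approves the tightening.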

Design. Run. Observe. Adapt. A closed loop where the thing that designed the process is the thing that learns whether its design worked — and can try again with what it learned.

This is not a product demo. It is what falls out, naturally, of three properties already in the design: a definition format that is strictly validated, a runtime that produces structured observability, and an assistant with the tools to start runs and read their results. Stack them up and the loop is emergent, not bolted on.

The engine’s job, again, is to keep the contract. The assistant’s job — now — is to improve the design against real behavior. Humans stay in the loop to approve changes, set direction, catch what matters. But the grinding iteration that used to fall on process engineers — the weeks of tweaking guards, reviewing failure reports, proposing process improvements — is a thing the assistant can do, continuously, at a scale no human team would match.

BPMN, Honestly

People ask if the engine supports BPMN. The honest answer is: as an import format, yes. As a runtime contract, no.

BPMN is rich. It is also twenty years old, shaped by the tools that render it, and full of execution semantics that don’t compose cleanly with agents — event subprocesses, compensation, escalation boundaries, intermediate throw/catch pairs. The moment an agent has to participate in those semantics, the runtime starts shipping behaviors the agent can’t reason about and the engine can’t audit cleanly. A native format can pick the subset that actually matters for mixed agent-and-deterministic work and leave the rest.

The BPMN compiler lowers BPMN into the native format. Supported patterns compile cleanly — sequence flows, XOR gateways, parallel split/join (mapped to the native branch node), multi-instance loops (mapped to foreach), human tasks, subprocess calls. Unsupported patterns produce compiler diagnostics rather than silent degradation. The BPMN source is kept alongside the native definition so the original diagram still renders and the lineage is auditable.
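The lowering-with-diagnostics behavior can be sketched as a lookup table plus an explicit error path. The BPMN element names are from BPMN 2.0, and branch and foreach are the native names mentioned above. Every other native kind, the pre-parsed element format, and the lower function are placeholders, since the real compiler works on BPMN XML.

```python
# BPMN element names are from BPMN 2.0. Native kinds other than "branch" and
# "foreach" are placeholders invented for this sketch.
LOWERING = {
    "userTask": "human_task",
    "callActivity": "subprocess",
    "parallelGateway": "branch",
    "exclusiveGateway": "guarded_exit",
}


def lower(elements: list) -> tuple:
    """Lower pre-parsed BPMN elements; report what cannot be lowered."""
    nodes, diagnostics = [], []
    for element in elements:
        kind = LOWERING.get(element["type"])
        if kind is None:
            diagnostics.append(
                f"{element['id']}: unsupported BPMN element '{element['type']}'"
            )
            continue
        # Keep the BPMN type on the node so lineage stays auditable.
        node = {"id": element["id"], "kind": kind, "bpmn_type": element["type"]}
        if element.get("multi_instance"):
            node = {"id": element["id"], "kind": "foreach", "body": node}
        nodes.append(node)
    return nodes, diagnostics


elements = [
    {"id": "review", "type": "userTask"},
    {"id": "split", "type": "parallelGateway"},
    {"id": "per_item", "type": "callActivity", "multi_instance": True},
    {"id": "undo", "type": "intermediateThrowEvent"},
]
nodes, diagnostics = lower(elements)
```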

This is the right treatment. Customers with existing BPMN libraries can bring them. Customers authoring fresh can skip BPMN entirely and let the assistant work in the native format directly. Nobody has to live inside a twenty-year-old XML specification to get modern agent behavior.

When Not to Reach for This

A process is the wrong tool when:

The whole task is a single AI call. Use an interaction.

The flow is straight-line and needs no state. Use a workflow.

The user wants an open-ended chat and there is no fixed sequence. Use an agent.

The sequence is long but purely deterministic (ETL, file processing, retry fan-out). Use a workflow.

Reach for a process when you have branching, state that accumulates, human gates, and at least one step where agentic reasoning earns its keep. That is the sweet spot. Everything else is overkill or underkill.

What Actually Changed

“Agent-first” is a phrase that has become cheap. Every workflow vendor now has an “AI mode” and calls the result agent-first. Most of them are workflow engines with an AI node — the first failure mode this essay opened with, rebranded.

What qualifies as first-principles AI-native design is whether the primitives themselves changed because agents exist. It is the difference between painting the house and rebuilding the foundation.

Four primitives carry the argument:

Format changed. Visually neutral, strictly validated, execution-shaped — because the authoring surface is an AI model, not a canvas.

Writes changed. Every node declares the fields it may modify; agent nodes have their result_schema built from that declaration — because agents are non-deterministic emitters that need an enforced bound on what they can change.

Transitions changed. Explicit triggers (auto, agent, user) with _next_node enums handed to the model — because agents routing on prose is not a feature, it is a bug.

Supervision invented. An optional, validated, commandable AI seat above the engine, constrained by the process definition — because there was no widespread precedent for it.
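
To make the writes and transitions primitives concrete, here is a minimal sketch of how a result schema might be derived from a node's declared writes and its allowed next nodes. The function names, the JSON-Schema-style shape, and the choice to place _next_node inside the result schema are all assumptions for illustration; the essay only says that the schema is built from the declaration and that the enum is handed to the model.

```python
def build_result_schema(context_schema, writes, next_nodes):
    """Build a JSON-Schema-style result schema for an agent node.

    context_schema: field name -> type schema for the process context
    writes: the fields this node declares it may modify
    next_nodes: the node ids this node is allowed to transition to
    """
    unknown = [f for f in writes if f not in context_schema]
    if unknown:
        raise ValueError(f"writes reference undeclared fields: {unknown}")
    # Only declared writes are exposed to the model.
    properties = {f: context_schema[f] for f in writes}
    # Routing is an enum choice, not free text.
    properties["_next_node"] = {"type": "string", "enum": list(next_nodes)}
    return {
        "type": "object",
        "properties": properties,
        "required": ["_next_node"],
        "additionalProperties": False,
    }


def check_result(schema, result):
    """Return a list of violations; an empty list means the result is in bounds.

    This checks field names and the transition only, not value types.
    """
    errors = []
    allowed = schema["properties"]
    for key in result:
        if key not in allowed:
            errors.append(f"field '{key}' is not in the node's declared writes")
    nxt = result.get("_next_node")
    if nxt not in allowed["_next_node"]["enum"]:
        errors.append(f"_next_node '{nxt}' is not an allowed transition")
    return errors
```

The design choice worth noticing is that the bound is enforced outside the model. The agent can emit whatever it likes; the engine accepts only fields the node declared and transitions the definition allows.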

Tools as process primitives, MCP as a governed substrate, the AI-assisted wiring loop, the choice between tool-node and agent-node execution, the Temporal floor — all of those are derivable from the four above. They follow from the same instinct. If agents are going to carry weight in the workflow, the contract has to carry it too.

What Ties It All Together

You can name the parts: an assistant, agents, tools, MCP, BPMN import, human tasks, durable execution. Most platforms will eventually assemble that list. The list is not the architecture.

What makes it an architecture is that everything in it operates under one process contract — typed context, declared writes, explicit transitions, immutable snapshots, scoped tools, validated supervisor commands, shared observability. One contract. One trust anchor. One audit trail.

This becomes especially important as MCP becomes the default way applications expose themselves to AI systems. If every MCP tool only enters through a chat agent, the enterprise gets a larger tool menu but not a governed process. Vertesia takes a different path: MCP capabilities become part of the process substrate. They can be wired directly, exposed to agents, scoped to nodes, observed in run history, and constrained by the same writes and transition model as everything else. We are not just adopting MCP. We are making MCP operationally useful inside enterprise processes.

That is the synthesis. Without it, you have a feature list. With it, you have a process engine.

I have not seen another platform make all three seats — author, worker, and supervisor — first-class in the same process runtime, with agents, tools, human tasks, MCP-enabled capabilities, durable execution, and typed process control under one contract. That may change. It should change — the enterprise needs more than one option here. Until it does, this is the architecture I think the category is moving toward: a process engine that takes AI seriously enough to redesign itself around it, not bolt it on.

Closing

We didn’t set out to build a new process engine. We set out to run real agents, in real production, against real enterprise work — contract review, invoice approval, vendor onboarding, the same list the category has been chasing for twenty years.

Every serious use case dragged us to the same place. The flow needed to be deterministic in the parts that mattered, and agentic in the parts that earned it, and the two had to share a runtime without the seams showing.

The design followed. The writes contract, the supervisor seat, the agent-authored definition, the shared tool layer, the BPMN import path, the durable substrate — none of those are isolated features. They are what fell out of taking the third actor seriously.

The engine is not an agent. It is the boundary that lets agents reason, act, and supervise without making them responsible for the invariants the business needs enforced.

This essay is one half of a pair. The companion piece, The Repository Reads Itself, is about a new Content Repository, built for agents and created for the same reason: once agents can read, the repository has to be designed for them.

Subscribe for the rest of the architecture series I’m writing from what we’re building at Vertesia.